I promised myself I wouldn’t get too animated about this column. I knew as soon as the act came into force, the checks kicked in, and VPNs started to be targeted, that whatever I could add to the conversation would be limited. There’s plenty that’s already been said about the UK’s Online Safety Act, about the petition to have it at least debated, the current government’s response to that, and the sibling legislation currently filtering its way through most modern Western democracies. And there’s far better analysis than I can muster on it from the usual suspects, so I won’t retread too much of that ground here, no sir.

Privacy, and your right to it, is king. It is absolute. Enshrined in all manner of conventions and legislation, from the ECHR to the US Constitution to the Geneva Convention and the UK Human Rights Act as well. Although in some of those cases it’s not explicitly stated, the courts have time and time again interpreted those amendments, laws, and provisions in the interest of their citizens to protect privacy. Of course with caveats, in terms of national security, public health, and such, but still, it is protected. Your personal details. Your private life.

Snake Oil Salesman

I’m not telling you anything new here, I know that, but as you know, our modern era is so intricately intertwined with the internet and its comings and goings that it too is equally filled with those who would do you harm. Those who are looking to profit from your misfortune, to impersonate you, to steal your information, and eventually your money, in one form or another. Unlike any other point in time, this giant web of social networks and sites has made it possible to reach an unprecedented number of people and to access information on those individuals.

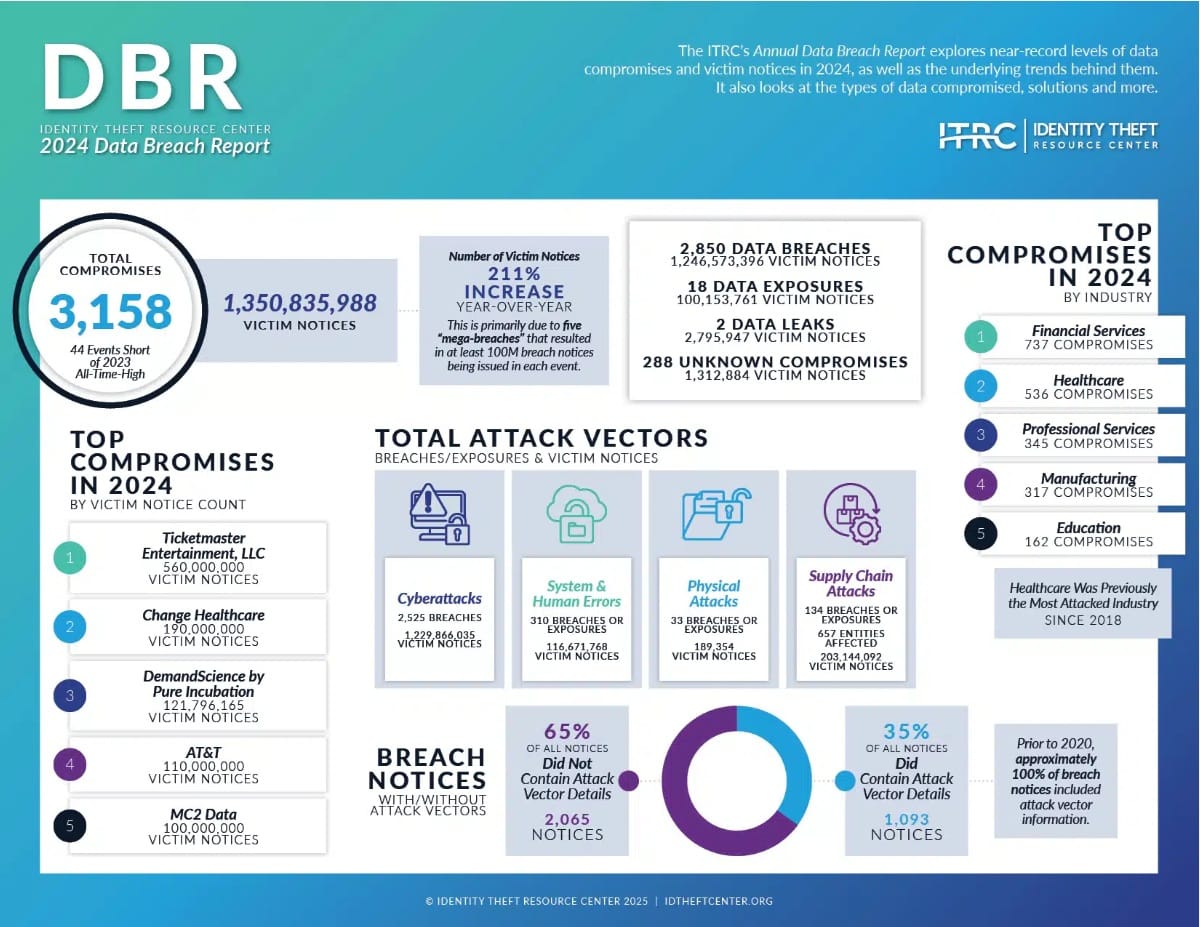

This isn’t like days of old, where a snake-oil salesman was limited to each village he visited, not even close. Heck, a quick online search will show you this year alone has seen multiple database breaches. Qantas had the information of 5.7 million customers stolen, the Co-op in the UK, 6.5 million, and there’s the older data too. According to the Identity Theft Resource Center, in 2024 alone, there were 3,158 separate data breaches, affecting 1.35 billion individuals worldwide.

That’s a problem, particularly when it comes to how sites now have to verify a user’s age. And to be clear, this isn’t just pornography sites either. We’re talking about any site that in any way might contain content (particularly imagery) that references suicide, self-harm, eating disorders, bullying, hate content, violence, injury, dangerous stunts, challenges, or exposure to substances. And that extends to comments and content too, stuff not published by the site directly. An entire spectrum of content not precisely defined in the legislation.

Broad Definitions & Punishment

You could be giving nutritional advice, or I could write a column on my struggles with mental health or the time I was mugged at university, and if that page had comments or the opportunity to respond, and someone from the UK did so with a meme or even just an emoji, the entire site could potentially fall under the act. At least if it didn’t have age verification on that content.

The consequences of failure to comply are numerous too. As a company you could be fined up to 10% of your global revenue (not profit, revenue) or £18 million, whichever is greater. You could have your site blocked from being available in the UK, and have payment providers and advertisers forced to withdraw their services. Plus, if you fail to provide the relevant information Ofcom requests, as a senior manager, you could be taken to court to face criminal charges as well.
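To put rough numbers on that fine, here is a minimal sketch in Python. It assumes the ceiling is the higher of the two figures, that is, £18 million or 10% of global revenue, whichever is greater. The function name and the example revenues are hypothetical, purely for illustration, and this is not legal advice.

```python
# Hypothetical illustration of the maximum fine described above.
# Assumption: the ceiling is the greater of a fixed £18 million
# or 10% of global revenue (revenue, not profit).

FIXED_CAP_GBP = 18_000_000


def max_fine_gbp(global_revenue_gbp: int) -> int:
    """Return the assumed maximum fine in whole pounds."""
    ten_percent = global_revenue_gbp // 10  # 10% of revenue, integer pounds
    return max(FIXED_CAP_GBP, ten_percent)


# A small site turning over £5 million still faces the £18 million ceiling,
# while a company turning over £1 billion faces a £100 million ceiling.
small_site = max_fine_gbp(5_000_000)
large_company = max_fine_gbp(1_000_000_000)
```

Under that assumption, the fixed £18 million figure is the one that bites for anyone turning over less than £180 million, which covers pretty much every small site and community forum out there.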

It doesn’t matter how big the site is or where it’s located; if you’re in any way UK-facing, you’re now subject to it. You need to perform risk assessments. Look at what content you’re producing and how people can interact with that. Certainly from the UK. Many sites are just outright geoblocking Britain, as it’s not worth the risk. I can understand that, as I considered it myself while building PC Blueprints, you know, despite being British. The problem is that naturally darkens the web for those of us in the UK. It’s a form of self-censorship, and it’s painful to see.

A Flawed Strategy

Here’s the thing: on the surface the aim is an admirable one. It doesn’t take much research to identify that we’re seeing a massive rise in the number of self-harm and suicide cases. Mental health conditions and depression among the young have erupted over the last twenty years. Particularly among teens as they battle with an ever-changing, interconnected, yet depressing world, all while dealing with the hormonal changes that naturally come inside a brain that hasn’t evolved as rapidly as our technology has. Although I would like to point out, this isn’t something that’s purely isolated to the young either.

Online discrimination and exposure to extreme content are, and absolutely should be, things we tackle, but this isn’t the way to do it. You’re effectively forcing multiple generations of adults in the UK (again, those who aren’t supposedly vulnerable to this content) to hand over their facial recognition data, or passports, driving licenses, and more, to unfamiliar third parties that store, hold, and verify that information. Purely to engage with platforms that, before this year, were often helpful communities and small beneficial information hubs, as well as the massive social media giants and extreme content sites.

Now the argument is that this data is only stored temporarily and used to effectively tick a checkmark on an account on that site, and that does all sound good on paper to those that don’t understand, but time and time again, we’ve seen hacks that target exactly that information. Either in transit, or locally stored on systems even temporarily.

The Meltdown bug, as an example, discovered way back in 2017, allowed some programs (malicious or otherwise) to read data held in memory and in cache that they should never have had access to, even with limited permissions, without much trouble. It affected some Arm processors as well as Intel, you know, those two brands that power the majority of the world’s servers.

The Costs are Horrific... so far.

The issue I have is that this act doesn’t fix the problem. It’s like seeing a rise in homelessness and then, instead of helping those out of homelessness, just banning it instead. To be clear, the UK government alone, with Ofcom in tow, has spent a gargantuan amount on this too. Preparation by Ofcom for the implementation of the act totalled £169 million (by its own estimates). The UK government expects it to cost anywhere between £99 million and £241 million over a ten-year period. Which is all very odd when you consider the bulk of the costs should be borne by tech companies and sites (again, an estimate of anywhere between £1.34 billion and £2.5 billion over the next decade, which is surely great for the economy).

Why this couldn’t have instead been invested in a massive educational campaign on internet safety, on social education programs, on advice and information for parents, on mental-health access for young adults, and more is beyond me. Instead of these draconian measures, that money could have done a lot more good by addressing the causes of the problem, rather than endangering the voting public’s privacy and setting up the perfect breeding grounds for malicious actors to hunt for passport details and credit card numbers, while failing to limit the accessibility of that content to begin with. Because you know, VPNs.

The Election Cycle

The majority of the comments I’ve seen, or the analogies made, tend to refer to 1984 by George Orwell as a good comparison for what’s going on here, the Big Brother state watching your every move. But there’s something a touch older that resonates perhaps a bit more pressingly, and that’s the words of Charlie Chaplin in The Great Dictator: “Don’t give yourselves to brutes. Men who despise you, enslave you, who regiment your lives. Tell you what to do, what to think, and what to feel.” That speech has always sat heavy on my mind over the years. I frequently go back to it and for good reason.

It’s this that’s the most terrifying to me. It’s the fact that the specific wording in this act is so broad. The definitions so vague. The application so wide-ranging. That it’s naturally damaging to freedom of expression, freedom of speech. There’s almost a pressure now to second-guess what you write, what you publish. Even for journalists like myself.

Yes, there are elements in place in the act designed to safeguard journalism that contributes towards the public discourse, and yet I’m still sitting here, poring over this very article, wondering if it’s worth the risk to mention self-harm or other “extreme” topics. Even just in discussion of what this act bans. Self-censorship. And that’s with a centrist political party currently in power.

Worst-case scenario then. What happens in four years’ time, eight years? When the world inevitably becomes more turbulent. What happens if an extremist party is elected in the UK and consolidates power, or several such parties dismantle democracy globally, or a party just adjusts the definitions of what that harmful content is, or strips back the journalistic protections? I get it, these are “What if” scenarios, hypotheticals, but even so.

With an aging population susceptible to cybercrime and a younger generation vulnerable to misinformation and with easier access to content than ever before, with or without the act, it’s vital we educate more people on how to engage with the internet, how to identify fraud, how to judge extreme content. And right now, we’re not doing that. As a society we are failing, and the results, well, they ain’t going to be good.